Outbound sales haven’t changed that much in the last 100 years: You get a contact, send an offer, and wait to see if the lead converts. But before any sale happens–whether via snail mail, email, or a direct call–a salesperson has to get to the contact.

Automated data gathering has been the spine of online information since Google started dominating the search market in the early 2000s. It extracts data from websites according to predefined rules, with little to no manual intervention. The exact collection methods vary greatly site by site; but, unless a website provides API access, it mostly falls under the umbrella term of web scraping.

You may not always see it, but automated data gathering is deeply entrenched into the modern sales process. Coupled with advances in machine learning, it can free salespeople from some of the menial, but necessary, tasks allowing them to focus on what they do best – make sales.

Gathering Data

In the past, data gathering was all manual. Salespeople would use telephone books and public listings as easy pickings. The process was slow, but you could turn the pages of a telephone book and use the data however you liked. In the digital space, you can jump into someone’s LinkedIn profile and take the information you need to contact and research them as a sales lead.

Nowadays, automated scripts get to the data almost instantly and at a huge scale. All profiles and public listings that are accessible to humans can get scraped in a couple of days.

Sales teams started automated data gathering early, with solutions to scrape yellow page listings for contact information. Developers would create scripts that, much like Google, would access a website and extract relevant data: names, phone numbers, email addresses, etc. As yellow pages and other listing sites began blocking scrapers, they started using proxy servers to avoid IP blocks.

“Legally, web scraping is in a gray area. In the US, a scraper must ensure that the data they’re getting is factual and not copyrighted. They must adhere to the robots.txt files and any Cease & Desist letters. It’s a lot to take into account before you start setting up the software or create a product from that data.” — Johan, technical lead at web scraping proxy network Smartproxy.

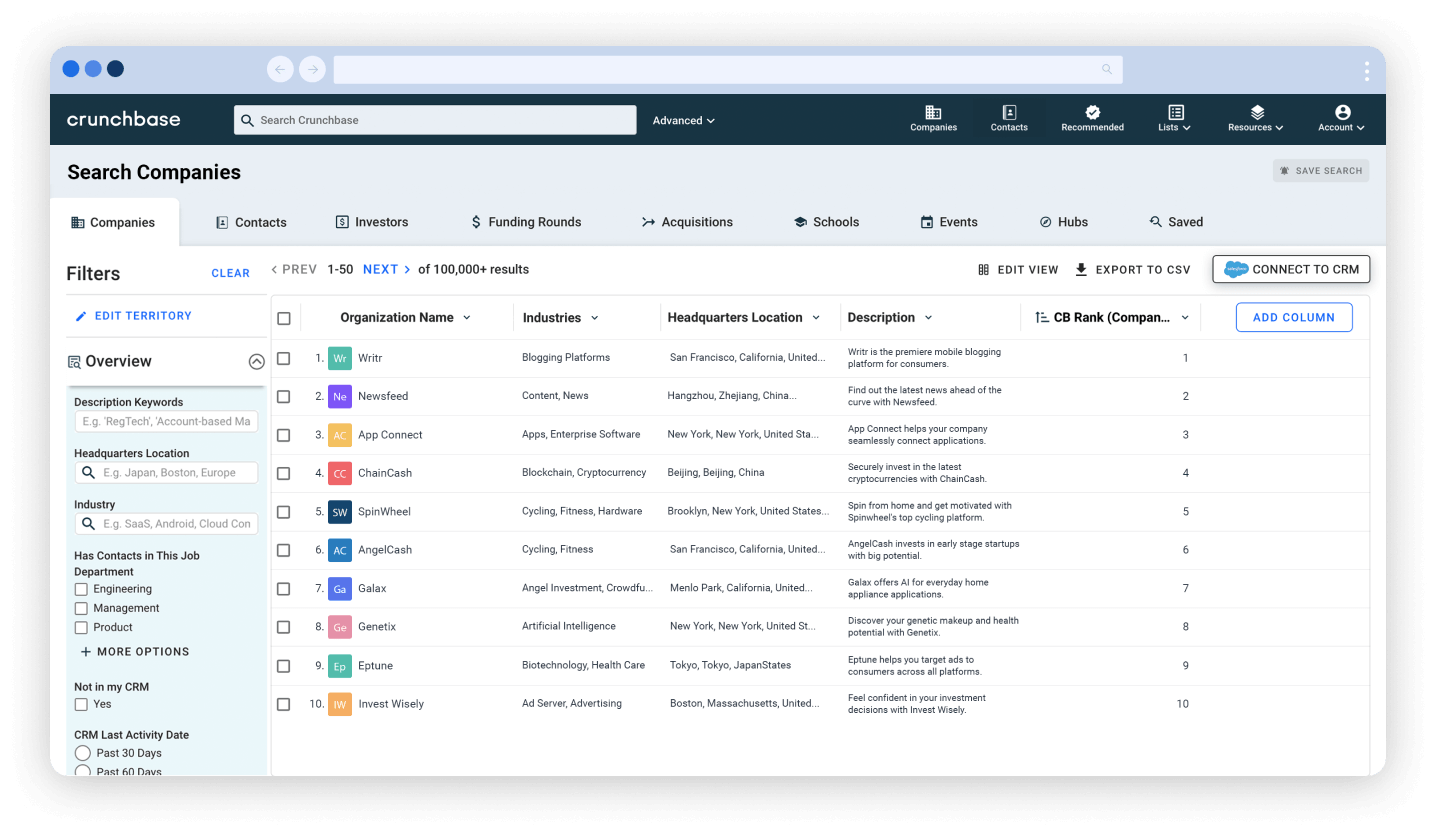

On top of automating the manual navigation and data gathering process, some companies choose to provide aggregated information as a product. For many salespeople, services like Hunter and Clearbit are daily staples, as they already have large databases of leads from varying data sources (many of those contacts got there voluntarily).

The Gold Nugget

Getting a contact, researching the contact person, and preparing for outreach are key sales processes. But they take time away from tangible tasks such as calls or emails. You can spend time digging around to find out how well the lead’s company is doing financially, determine if they might have a criminal background, etc.

Lead research is crucial, as it lets a salesperson use a scenario fit for the contact’s social position or professional context. Research for international sales might include time zones and the best times to call. You can never have too much data.

Automating Lead Research

With several natural language processing algorithms, sales teams are able to better research leads or detect risky contacts. For example, Google’s open-sourced BERT algorithm can scan through news articles that mention a specific person and perform a sentiment analysis to check for a negative reputation. Others can examine whether the leads were ever associated with criminals, competitors, or a target market vertical.

If a web scraper gathers structured data (such as in the form of javascript object notation), a machine-learning algorithm can be programmed to understand such data. Then, it can not only extract names, email addresses, locations, or other contact information, but also create network graphs linking those persons to data gathered from other sources by using link analysis.

In the end, the result is acceptable: Sales teams get leads with certain features already pre-qualified. This level of automation is far from perfect, but it does save a lot of time and energy. Some companies claim that automation has saved over 20 percent of their sales time.

Defining What You Can And Cannot Automate

Sales is still a very human process. Direct mail, spam emails and robocalls are not true sales techniques. Automating the personal part of the process is what made people put up “No Soliciting” signs on their doors in the first place.

As a technical person who sees these processes from the back end, I feel data gathering has earned the scrutiny it gets. Nevertheless, you should never ignore legal technical solutions that potentially give a substantial edge over the competition. I see them as a way to reduce boring work: Automate what you would gather manually anyway, then use the data to further automate other menial tasks.

As I see it, we’re not even close to beating the Turing test–creating an AI able to seamlessly communicate with a person for an extended period of time. For that reason, it’s still best to leave outbound sales to real salespeople.